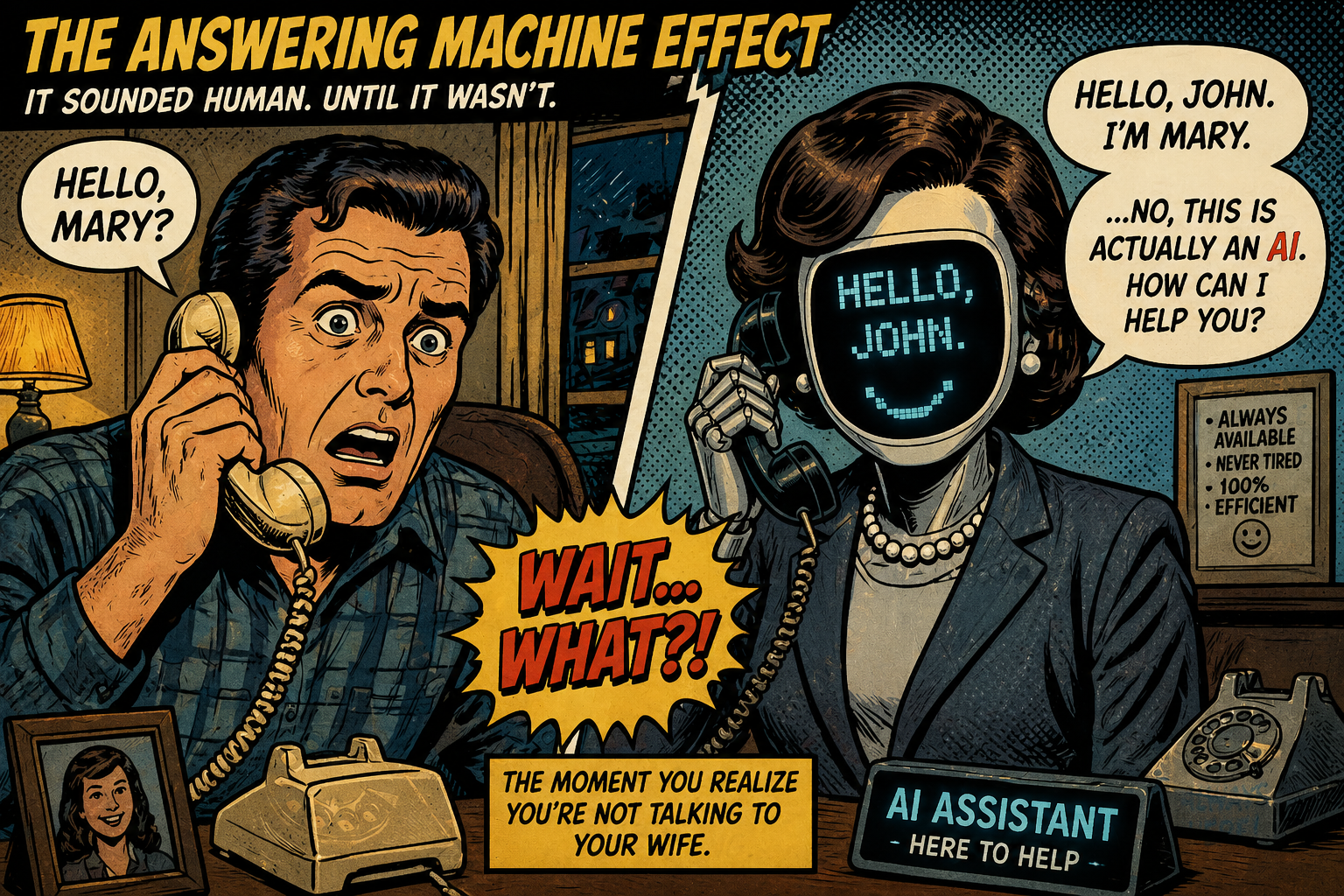

The Answering Machine Effect

Why you already know what you’re about to read isn’t real, and what to do about it.

Imagine a small red badge on every article, every design, every conversation: AI-Generated Content.

Before you read a single word, you feel it: a tiny click of disengagement, an instinctive pullback. You wouldn’t press “Accept cookies” without thinking, but you’d skip AI-labeled content without even deciding.

Most people already know what they’d do. Close the tab. That reaction isn’t new. Let me take you back to the era of the answering machine.

A friend sets their voicemail to sound like a real answer.

“Hello?” A pause. “Hi, I’m here.”

You speak. For a breath, you’re in a conversation. Then the timing wobbles. Your words don’t quite land. And the frame collapses: you’re not talking to anyone. You’re caught in a recording.

The words were fine. Clear, even. What broke wasn’t comprehension. It was presence. In half a second, your mind reclassified the voice from person to stored artifact. And the moment that happened, your attention fled, your goodwill evaporated, and the interaction became a chore.

That’s the Answering Machine Effect. Not a judgment of quality. A withdrawal from absence.

The Three-Second Collapse

Every time the effect triggers, the same rapid sequence fires:

- Assumption of presence. You believe a live mind is behind the words.

- Flicker of doubt. Timing, flatness, an uncanny completeness: something whispers “off.”

- Collapse of the frame. You know, pre-consciously, that no one is there.

```mermaid
graph LR
    A[📞 Expectation of<br/>Human Presence] --> B[🎙️ Exposure to<br/>Coherent Output]
    B --> C{🔍 Detection of<br/>Structural Cues}
    C -- Timing, flatness, completeness --> D[🏷️ Reclassification:<br/>Generated Artifact]
    D --> E[⚡ Pre-Reflective<br/>Withdrawal]
    E --> F[🚫 Engagement<br/>Collapses]

    classDef start fill:#e6ffe6,stroke:#2e7d32,stroke-width:3px,color:#004d40
    classDef detect fill:#fff3e0,stroke:#f57c00,stroke-width:3px,color:#bf360c
    classDef negative fill:#ffebee,stroke:#c62828,stroke-width:3px,color:#b71c1c
    classDef final fill:#fce4ec,stroke:#880e4f,stroke-width:3px,color:#4a0024

    class A start
    class B,C detect
    class D,E negative
    class F final
```

Notice that nowhere in this sequence do you check grammar, fact-check, or weigh the argument. The collapse is pre-reflective. It arrives in milliseconds. And once it hits, it is very hard to undo.

The emotion that follows is unmistakable: a spike of irritation, a withdrawal of trust, a sudden sense that the exchange was misrepresented. “Fraud” is too strong a word; there’s rarely ill intent. But the structure of the feeling is the same as any small betrayal: I thought you were there, and you weren’t.

AI Puts This Mechanism on a Hair Trigger

Fast-forward to today. The same mechanism now operates on every piece of content.

Scenario A: Chatbot or human?

You’re offered two choices for a task that requires judgment or trust. Most people, most of the time, prefer the human, not because the AI is worse, but because the human implies presence, accountability, the possibility of being understood. When the stakes are low and the task is routine, the chatbot wins for speed. But the moment interpretation matters, what the other party is, human or machine, becomes the deciding variable.

Scenario B: The AI label

A document is well-written, accurate, and clearly labeled AI-Generated Content. The label changes nothing about the text, and yet it changes everything. In contexts where meaning, opinion, or identity is at stake, the label immediately lowers engagement. Readers reclassify the piece before they even begin. They do it without thinking.

That’s the Answering Machine Effect at scale. It doesn’t wait for evaluation. It happens before the first sentence.

Why We Recoil

This isn’t irrational. It’s a compound reaction with deep roots.

- Aversion to the non-living. We pull back from things that look like agency but have none. It’s the same instinct that makes us distrust a limp handshake or a smile that stops at the mouth.

- Expectation violation. We expect a mind. We get a machine. The gap stings.

- Absence of struggle. Human thought leaks. It hesitates, revises, contradicts itself. AI output arrives frictionless, fully resolved. The lack of visible process feels like a missing heartbeat.

- Effort heuristic. We value what cost someone effort. When content costs nothing to produce, we treat it as worth nothing to consume.

- Attention under abundance. In a flood of content, we filter by its source. “AI-generated” becomes a quick-skip signal, the same way “sponsored” does.

- Loss of reciprocity. We’re built for exchange. When we realize no one is on the other side, participation feels pointless.

Those factors combine into a single, fast verdict: This isn’t for me. And that verdict is extraordinarily persistent.

This mechanism isn’t limited to content. It’s the same instinct we use to judge people, and it operates just as fast.

The Person You Will Never Like

Everyone has experienced this. You meet someone for the first time. Nothing overtly wrong happens. No argument, no clear mistake. But something doesn’t land.

The handshake feels off. The eye contact is wrong. The timing of the conversation doesn’t quite match.

And within seconds, a judgment forms:

I don’t like this person.

What’s important is how little information that judgment is based on.

It’s not a considered evaluation of their character. It’s not a measured assessment of their ideas.

It’s a rapid, instinctive reaction to the way the interaction feels. And once that impression forms, it’s remarkably persistent.

Even if the person is polite, what they say is reasonable, and there is objectively nothing wrong, the initial signal tends to dominate.

You don’t engage as openly. You don’t offer the same goodwill. You don’t lean into the interaction.

Something has already closed.

This doesn’t just happen with individuals. It happens with politicians, celebrities, public figures, anyone presented through a limited interaction. A short clip, a single interview, even a photograph can be enough to trigger the response.

The content of what they say matters less than how they are perceived in that first moment.

The important point is this:

the reaction is not primarily about correctness or quality; it is about fit, presence, and instinct

This is the same mechanism at work in the Answering Machine Effect.

In one case, you meet a person and something feels off. In the other, you encounter content and something feels absent.

In both cases, the result is the same:

a fast, pre-reflective withdrawal from the interaction

You don’t need to prove the person is wrong. You don’t need to analyze the content.

The decision is already made.

That’s why the effect is so difficult to reverse.

Because by the time you start thinking about it, the judgment has already happened.

We Want a Story, Not a Signal

Human communication is not only the transfer of information. It is the trace of a path: what someone saw, what they struggled with, what changed their mind, and why the result mattered enough to say. You’re not just reading the answer. You’re understanding the path that led to it.

Even when we do not ask these questions directly, we listen for them. We listen for hesitation, revision, emphasis, contradiction, and evidence that a mind has moved through something before arriving at an answer.

That is what gives communication weight.

AI systems, as they are often used, suppress this dimension. Their outputs are resolved rather than evolving, coherent without visible revision, and detached from any lived trajectory. They provide signal, but not story.

The information may be correct. It may even be useful. But it can still feel hollow because nothing seems to have happened to produce it.

This connects directly to the Answering Machine Effect. The AI output doesn’t fail. It reveals what was never there.

It is a voice without a trajectory.

We do not just want answers. We want to know what it cost to arrive at them.

That is why AI used as substitution often feels empty, while AI used as part of an ongoing human process can feel different. The question is not whether AI was involved. The question is whether the communication still carries the marks of a path taken.

The Compression Stack: why perfect AI still smells synthetic

Here’s the deeper reason the effect won’t vanish with better models. Every AI output is the result of a triple compression:

```mermaid
graph LR
    A[🧠 Human Thought<br/>Embodied, irregular, contextual] -->|Step 1: Symbolic Compression| B[💬 Language<br/>Linear, shared, lossy]
    B -->|Step 2: Dataset Aggregation| C[📊 Training Data<br/>Pattern averaging, edge smoothing]
    C -->|Step 3: Model Inference| D[🤖 AI Output<br/>Statistically plausible, frictionless]

    classDef human fill:#e8f5e9,stroke:#2e7d32,stroke-width:3px,color:#1b5e20
    classDef transition fill:#fff8e1,stroke:#f9a825,stroke-width:3px,color:#e65100
    classDef machine fill:#fce4ec,stroke:#c62828,stroke-width:3px,color:#b71c1c

    class A human
    class B,C transition
    class D machine
```

Thought is chaotic, situated, embodied. Language flattens it. Training data averages what remains. The model’s output smooths away the last edges. What you receive is statistically plausible but structurally improbable as lived experience. It’s not that the output is wrong. It’s that it didn’t fight to exist.

Humans don’t detect that something is wrong. They detect that nothing resisted being made.

That absence of resistance is the fingerprint of the generator. And it’s detectable even when the output is flawless.

The human premium

This changes how value works. When content is abundant and nearly free to produce, the content itself stops being the differentiator. What matters is perceived origin.

Interactions that feel human acquire a premium, not because they’re more accurate, but because they carry presence, responsiveness, and the possibility of being corrected. I call this the human premium. It’s the inverse of the Answering Machine Effect. One is the sting of absence; the other is the extra weight granted to anything that feels genuinely inhabited.

In practice, this means: if you’re building a product, a service, or a career, the places where you put a real human in the loop will increasingly be the places where you win. Not everywhere: efficiency still matters for routine tasks. But wherever trust, judgment, or interpretation is at stake, the human premium will dominate.

Ask yourself: when was the last time you preferred an answering machine to a human voice for a conversation that mattered? Probably never, because something essential was absent. That same absence is now detectable in AI-generated content.

The information deluge will only amplify this

The supply of generated content is exploding. As it does, attention shifts from deep evaluation to coarse origin-filtering. The more AI content there is, the faster people will rely on signs of human authorship: visible struggle, identifiable perspective, continuity over time.

And those signals are becoming persistent. Future platforms will likely make production methods transparent. An “AI-generated” label may not just be a policy; it could be an automatic metadata layer. Content won’t just be judged on what it is, but on how it was made. Reputation will have a memory.

How to use AI without triggering the effect

All of this leads to a practical fork. There are two ways to use AI, substitution or amplification, and each comes in two variants.

| Mode | What it does | Long-term risk |
|---|---|---|
| Substitution | AI replaces your work | High rejection, low differentiation |
| Concealed substitution | AI replaces your work, but you hide it | Unstable; betrayal effect amplified |
| Assisted amplification | AI extends your thinking | Low; your voice remains central |
| Integrated amplification | You and the AI co-evolve ideas | Lowest; process is visible |

Substitution produces the kind of low-effort, interchangeable output that the Answering Machine Effect is built to detect. Amplification, especially the integrated kind, preserves the one thing that can’t be faked: the arc of your own thinking. Show your work. Leave the scars. That’s what people recognize as real.

A quick test of your own orientation

These aren’t abstract questions. They’re diagnostic.

- The label test. A document carries the badge AI-Generated Content. What’s your immediate gut response: continue, or withdraw?

- The interaction test. For a meaningful conversation, do you choose human or chatbot? Are they even comparable in your mind?

- The engagement test. Spend an hour with an AI. Does the interaction deepen, or does it feel hollow by the end?

There’s no right answer. But the pattern is yours to notice. It tells you where you actually stand, and it’s that intuition, not any tool or checklist, that will guide your own use of AI.

The ending that brings us back to the beginning

Human beings don’t just exchange information.

We live in stories. We think in stories. We remember in stories.

Everything we understand about the world is tied to a narrative: where we came from, what happened to us, what changed us, and why it mattered. Even our own sense of self is a story we tell and retell, often incomplete, sometimes wrong, sometimes invented.

But it is still ours.

That’s the difference.

When you hear a human voice, you’re not just hearing words. You’re hearing the weight behind them: the history, the contradictions, the things that shaped them. You’re hearing a story, even if it’s only implied.

AI doesn’t have that.

It can produce the signal. It can reproduce the pattern. But it has no story behind it. Nothing lived, nothing risked, nothing changed to produce the output.

And that absence is what you feel.

That’s what the answering machine revealed, long before AI ever existed.

That’s the line.

Between something that was generated… and something that was lived.

And until systems begin to grow with us, remembering, changing, accumulating a real trajectory through time, that line will remain visible.

We don’t just want answers.

We want the story that made them possible.

Closing

There is no single correct position on AI.

Different people will arrive at different conclusions. Some will embrace it fully. Some will reject it entirely. Most will find some intermediate position.

All of these responses are reasonable.

The analysis in this post suggests a simpler distinction:

If the machine can do something well, there is no advantage in doing it yourself. That is what the tool is for. But the machine cannot replicate:

- your perspective

- your judgment

- your experience

- your trajectory through time

It cannot produce your story. This leads to a practical question. Not: how much AI should you use?

But:

what do you want to remain yours?

The answer varies by person. It may vary over time. The framework presented here does not prescribe an outcome. It provides a basis for determining your own position.

The Answering Machine Effect describes a detection mechanism, one that operates rapidly and persists once triggered. Whether that mechanism activates in your own engagement with AI is not a question this post can answer.

But it is a question worth asking.

Because the answer determines not whether you will use AI.

But how.

📚 Further Reading

This post draws on several established ideas in human perception and interaction:

- The Uncanny Valley (Masahiro Mori)

- The ELIZA Effect (Joseph Weizenbaum)

- Computers Are Social Actors (CASA) (Clifford Nass)

- Expectancy Violations Theory (Judee Burgoon)

- The Effort Heuristic

- Thin-slicing and rapid judgment (Nalini Ambady and Robert Rosenthal; popularized by Malcolm Gladwell in Blink)

- Narrative cognition in The Storytelling Animal (Jonathan Gottschall)

- Human narrative structures in Sapiens (Yuval Noah Harari)

- The concept of Information Overload (Alvin Toffler)

These frameworks help explain why the Answering Machine Effect emerges but do not fully capture it.