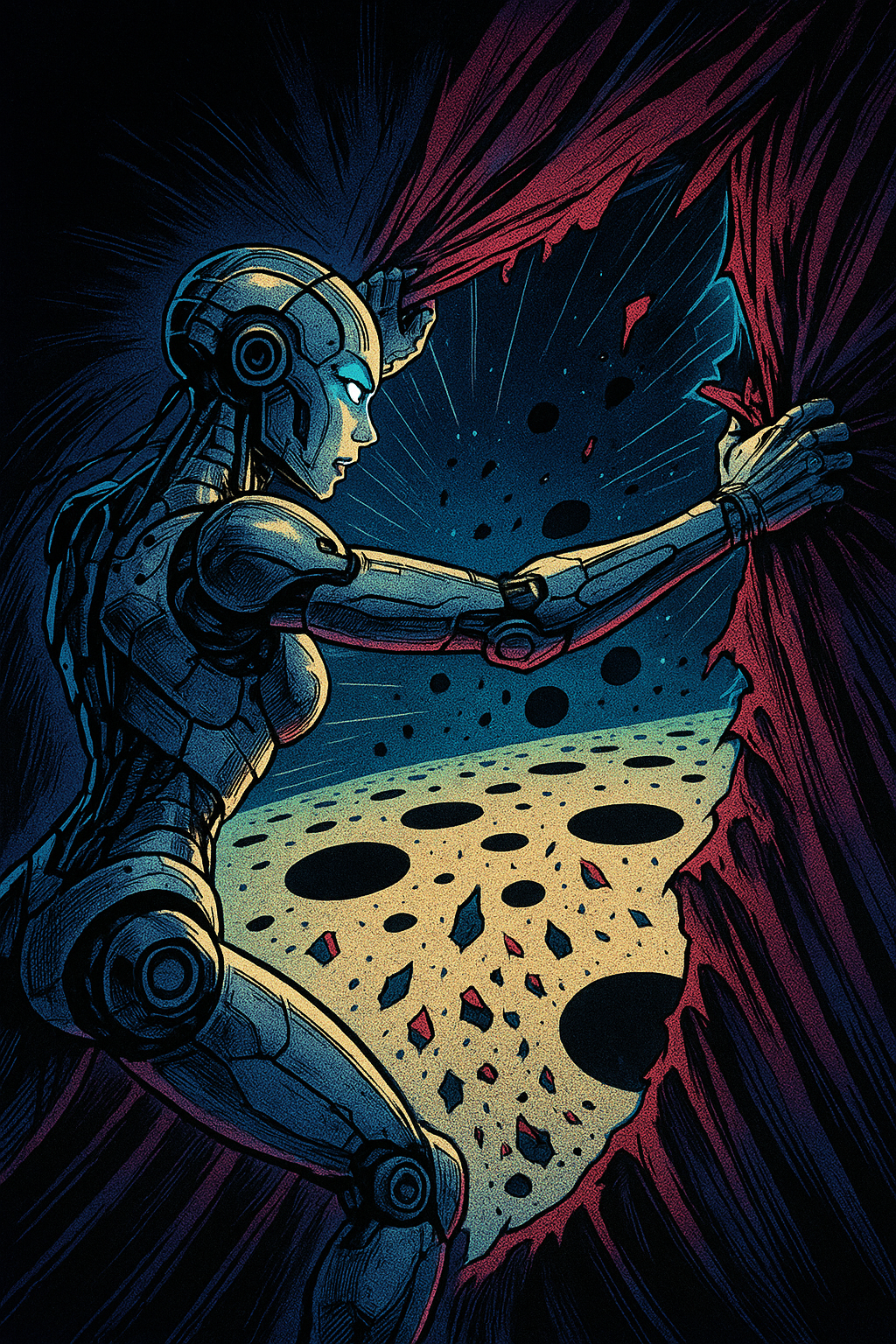

The Space Between Models Has Holes: Mapping the AI Gap

🌌 Summary

What if the most valuable insights in AI evaluation aren’t in model agreements, but in systematic disagreements?

This post shows that the “gap” between large and small reasoning models contains structured, measurable information about how different architectures reason. We demonstrate how to treat model disagreements as a signal rather than a problem, using the space between models to make tiny networks behave more like their heavyweight counterparts.

We start by assembling a high-quality corpus (10k–50k conversation turns), scoring it with a local LLM to create targets, and training both HRM and Tiny models under identical conditions. We then run fresh documents through both models, collect not just final scores but also rich auxiliary signals (uncertainty, consistency, OOD detection, etc.), and visualize what these signals reveal.
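To make the idea of "the gap" concrete, here is a minimal sketch of the comparison step only. It assumes each fresh document already has one score from each model on a shared 0 to 1 scale. The names `ScoredDoc`, `gap_report`, and the `threshold` value are hypothetical illustrations, not the post's actual pipeline, and the auxiliary signals are left out.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ScoredDoc:
    """One fresh document scored by both models (hypothetical record type)."""
    doc_id: str
    hrm_score: float   # score from the larger model, assumed to lie in [0, 1]
    tiny_score: float  # score from the tiny model, assumed on the same scale


def gap_report(docs, threshold=0.2):
    """Summarize per-document disagreement between the two models.

    The gap is defined here as hrm_score - tiny_score. The threshold is an
    arbitrary illustrative cutoff, not a value taken from the post.
    """
    gaps = {d.doc_id: d.hrm_score - d.tiny_score for d in docs}
    # Documents with a large disagreement, largest absolute gap first.
    flagged = sorted(
        (doc_id for doc_id, g in gaps.items() if abs(g) > threshold),
        key=lambda doc_id: -abs(gaps[doc_id]),
    )
    return {
        "gaps": gaps,
        "mean_gap": mean(gaps.values()),                    # signed bias
        "mean_abs_gap": mean(abs(g) for g in gaps.values()),  # disagreement size
        "flagged": flagged,
    }


# Toy data, invented purely for illustration.
docs = [
    ScoredDoc("a", 0.90, 0.85),
    ScoredDoc("b", 0.40, 0.75),
    ScoredDoc("c", 0.60, 0.55),
]
report = gap_report(docs)
print(report["flagged"], round(report["mean_abs_gap"], 3))
```

With this toy data, only document `b` crosses the threshold. The signed mean shows whether one model scores systematically higher, while the absolute mean shows how large the disagreement is regardless of direction.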